
Maps of Music

by Andreas Rauber, Thomas Lidy and Robert Neumayer

Manually sorting and searching through directory structures is no way to enjoy music, no matter how much of it we can pack onto a single, tiny device. Players should be ‘intelligent’ enough to organize the music for us, allowing us to pick only what we want to listen to.

Music is becoming one of the dominant goods in internet traffic and private storage, and corresponding business models are slowly starting to catch up. Yet we still struggle to select from this wealth of music and to choose which songs to listen to in a specific situation or mood. We are forced to resort to cumbersome clicking or to sorting titles into playlists by hand. Otherwise we fall back on listening to pre-compiled albums, or revert to the dullest automatic selection method of all, random play. To enjoy the plethora of music available, we need new techniques and interfaces that free us from the burden of selecting tracks.

Private music collections and audio players are badly in need of more intuitive methods for organizing and selecting music, and commercial vendors most probably are as well. This need is evident from the popularity of current recommendation techniques, such as the dominant “customers who bought this album also bought this” style of recommendation. Letting customers casually browse sections of vast stocks of audio tracks and discover titles they did not know before, however, requires a different approach.

To this end, we are developing methods that organize audio repositories according to the way we perceive music, grouping audio tracks by their perceived acoustic similarity. The SOM-enhanced JukeBox system (SOMeJB) provides automatic indexing and organization of music repositories based on the perceived sound similarity of individual tracks. A map metaphor is used for visualization, with similar songs placed close to each other on the map.

Using a variety of feature extraction techniques, we analyse the audio signal (WAV or MP3 files) to extract representations that allow us to compute the perceived similarity of two pieces of music. Amongst other statistical features, we use Rhythm Patterns. These model the amplitude modulation frequencies in different frequency bands and incorporate a range of psycho-acoustic transformations. The features are extracted in two stages. First, the specific loudness sensation in each frequency band is computed. This is then transformed into a time-invariant representation based on modulation frequency. The resulting features describe the complex rhythmic interactions that are characteristic of different musical styles.
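The two-stage idea can be illustrated with the heavily simplified Python sketch below. It is not the SOMeJB feature extractor. Equal-width frequency bands and a logarithmic energy scale stand in for the psycho-acoustic transformations (critical bands, loudness scaling), and all function names and parameters (`frame_len`, `n_bands`, `n_mod`) are hypothetical. Stage one produces a loudness curve per band. Stage two keeps only the magnitudes of each curve's spectrum, so the result describes how strongly loudness fluctuates at each modulation rate, regardless of where in the track those fluctuations occur. Euclidean distance between such vectors then serves as a simple similarity measure.

```python
import cmath
import math

def dft_magnitudes(x):
    """Magnitudes of the non-negative-frequency bins of a naive DFT (O(n^2))."""
    n = len(x)
    return [abs(sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)))
            for k in range(n // 2 + 1)]

def band_loudness(signal, frame_len=128, n_bands=4):
    """Stage 1 (simplified stand-in, not the SOMeJB extractor):
    per-frame log energy in n_bands equal-width frequency bands."""
    frames = [signal[i:i + frame_len]
              for i in range(0, len(signal) - frame_len + 1, frame_len)]
    loudness = [[] for _ in range(n_bands)]  # loudness[band][frame]
    for frame in frames:
        spectrum = dft_magnitudes(frame)
        width = len(spectrum) // n_bands
        for b in range(n_bands):
            energy = sum(m * m for m in spectrum[b * width:(b + 1) * width])
            loudness[b].append(10 * math.log10(energy + 1e-12))  # dB-like scale
    return loudness

def rhythm_pattern(signal, frame_len=128, n_bands=4, n_mod=3):
    """Stage 2: modulation spectrum of each band's loudness curve.
    Keeping magnitudes only discards *when* fluctuations happen;
    skipping bin 0 (DC) discards the constant overall loudness level."""
    features = []
    for curve in band_loudness(signal, frame_len, n_bands):
        features.extend(dft_magnitudes(curve)[1:n_mod + 1])
    return features

def distance(a, b):
    """Euclidean distance between feature vectors: smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Usage: a steady tone versus the same tone alternating between loud and soft.
steady = [math.sin(2 * math.pi * 4 * t / 128) for t in range(2048)]
pulsed = [(1.0 if (t // 512) % 2 == 0 else 0.2) * v for t, v in enumerate(steady)]
f_steady, f_pulsed = rhythm_pattern(steady), rhythm_pattern(pulsed)
```

In this sketch, a tone of constant amplitude yields modulation values close to zero. The same tone switched between loud and soft stretches produces clearly non-zero values, so the two signals end up far apart in feature space.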

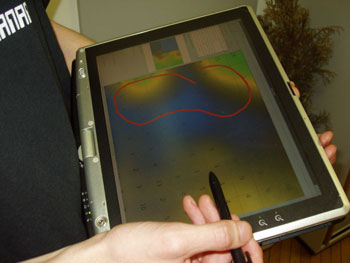

On top of this, we can apply standard information retrieval techniques to search for specific pieces of music or for tracks from a certain musical genre. Classifiers can be trained to sort audio into pre-defined genre categories. With the SOMeJB system, we go one step further by clustering individual audio tracks with a self-organizing map (SOM), which allows us to overcome traditional genre boundaries. The SOM maps the high-dimensional feature space onto a two-dimensional map space that can be conveniently explored. Visualization techniques such as Smoothed Data Histograms reveal the cluster boundaries on the map and depict them as ‘Islands of Music’, with each island containing music of a specific type or style. Vector field visualizations on top of these produce weather charts that help users interpret the map, telling them where more aggressive or quieter music is located.

With PlaySOM and the PocketSOMPlayer, we have added two novel interface modules. They let users browse a music collection by navigating a map of clustered music tracks, and select regions of interest containing similar tracks for playback. PlaySOM is designed primarily for interaction via a large, preferably touch-screen, display. The PocketSOMPlayer is implemented for mobile devices. It supports both local and streamed audio playback, or can act as a remote control for the audio server.

Music is selected simply by marking an area on the map. Alternatively, in a slightly more sophisticated fashion, users can draw a trajectory on the map along which music is played. This allows us, say, to start with soft classical piano music, move on to more dynamic orchestral pieces, and then return to softer violin pieces. Or, in a different area of the map, we might start with electronic music and move on to more dynamic pop and rock pieces, gradually increasing in dynamics until we reach the metal area of the map.
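The map-based organization can likewise be sketched with a minimal self-organizing map in plain Python. This is a generic textbook SOM with a Gaussian neighbourhood and a linearly decaying learning rate and radius. It is not the SOMeJB implementation, and all names are hypothetical. `smoothed_data_histogram` is a simplified form of the Smoothed Data Histogram voting idea, in which each track votes for its few closest map units. `playlist_along_path` is a hypothetical illustration of trajectory-based selection that samples grid units along straight lines between waypoints. The toy two-dimensional "features" stand in for real audio descriptors.

```python
import math
import random

def best_matching_unit(weights, x):
    """Grid position (row, col) of the unit whose weight vector is closest to x."""
    return min(weights, key=lambda u: sum((w - v) ** 2 for w, v in zip(weights[u], x)))

def train_som(data, rows=3, cols=3, epochs=50, lr0=0.5, seed=0):
    """Generic online SOM (not SOMeJB code): Gaussian neighbourhood,
    learning rate and radius shrinking linearly over training."""
    rng = random.Random(seed)
    dim = len(data[0])
    weights = {(r, c): [rng.random() for _ in range(dim)]
               for r in range(rows) for c in range(cols)}
    radius0 = max(rows, cols) / 2
    total, step = epochs * len(data), 0
    for _ in range(epochs):
        for x in rng.sample(data, len(data)):  # shuffled pass over the data
            frac = step / total
            lr = lr0 * (1 - frac)
            radius = max(radius0 * (1 - frac), 0.5)
            br, bc = best_matching_unit(weights, x)
            for (r, c), w in weights.items():
                h = math.exp(-((r - br) ** 2 + (c - bc) ** 2) / (2 * radius ** 2))
                for i in range(dim):
                    w[i] += lr * h * (x[i] - w[i])  # pull unit towards x
            step += 1
    return weights

def smoothed_data_histogram(weights, data, s=3):
    """Simplified SDH: each vector gives s, s-1, ..., 1 votes to its s closest units."""
    hist = {unit: 0.0 for unit in weights}
    norm = s * (s + 1) / 2  # every vector contributes exactly 1 in total
    for x in data:
        ranked = sorted(weights,
                        key=lambda u: sum((w - v) ** 2 for w, v in zip(weights[u], x)))
        for rank, unit in enumerate(ranked[:s]):
            hist[unit] += (s - rank) / norm
    return hist  # high values mark the 'islands'

def map_tracks(weights, tracks):
    """Place each track (name -> feature vector) on its best-matching unit."""
    placement = {}
    for name, vec in tracks.items():
        placement.setdefault(best_matching_unit(weights, vec), []).append(name)
    return placement

def playlist_along_path(placement, waypoints, steps_per_leg=4):
    """Hypothetical trajectory selection: visit units on straight lines
    between consecutive waypoints and collect their tracks in order."""
    visited = []
    for (r0, c0), (r1, c1) in zip(waypoints, waypoints[1:]):
        for k in range(steps_per_leg + 1):
            t = k / steps_per_leg
            unit = (round(r0 + t * (r1 - r0)), round(c0 + t * (c1 - c0)))
            if unit not in visited:
                visited.append(unit)
    return [name for unit in visited for name in placement.get(unit, [])]

# Usage with toy 2-D features: three "quiet" and three "loud" tracks.
tracks = {"piano": [0.1, 0.1], "violin": [0.15, 0.1], "harp": [0.1, 0.15],
          "rock": [0.9, 0.9], "metal": [0.85, 0.9], "punk": [0.9, 0.85]}
som = train_som(list(tracks.values()))
placement = map_tracks(som, tracks)
start = best_matching_unit(som, tracks["piano"])
end = best_matching_unit(som, tracks["metal"])
playlist = playlist_along_path(placement, [start, end])
hist = smoothed_data_histogram(som, list(tracks.values()))
```

Every track is placed on the grid once, in advance. Selecting an area or drawing a trajectory therefore reduces to a lookup of grid units, with no feature distances to compute at playback time.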
This approach allows users to quickly explore the range of music available and to effortlessly select styles according to their preferences, simply by selecting the appropriate areas on the map. Current work focuses on further improving the feature extraction process to better capture the perceived characteristics of audio. It also addresses methods for integrating different views of, and interaction possibilities with, large music collections. Part of this work was supported by the European Union in the 6th Framework Programme, IST, through the Networks of Excellence ‘DELOS’ on Digital Libraries and ‘MUSCLE’ on Multimedia Understanding through Semantics, Computation and Learning.