|

|||

CWI's FACS Cluster - Facilitating the Advancement of Computational Scienceby Stefan Blom, Sander Bohte and Niels Nes The Centre for Mathematics and Computer Science (CWI) performs a wide range of research. Consequently, its high-performance computing facilities must meet diverse requirements. This has led to the purchase of a cluster with an asymmetric core-edge design. Under the influence of this asymmetric structure, some cluster applications are evolving into Grid applications. Many research groups at CWI share a need for high-performance computer facilities. In the FACS (Facilitating the Advancement of Computational Science) project, three of these groups combined their resources to set up a large shared cluster. Since each user group has its own specific demands on memory, CPU power and bandwidth, the FACS cluster deviates from the more usual symmetric design. The cluster's edge serves as a compute farm. It contains several groups of machines, whose main task is to provide CPU cycles. The core consists of a small number of machines, connected by a high-bandwidth low-latency network (Infiniband) and each equipped with a large amount of memory. Currently, we have 22 (mostly dual CPU) machines in the edge and two quad AMD Opterons with 16G each in the core.

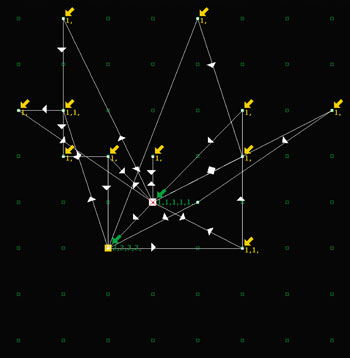

The Database Architectures and Information Access group works on database technology and needs machines with both as much memory as possible and huge, fast storage. For this type of work, the bandwidth between memory and storage is critical. Furthermore, part of the research consists of designing highly efficient systems for extremely large databases. Since the research considers typical database hardware set-ups, the large-memory fast-disk multi-CPU nodes of the cluster core are a good model for such set-ups. The Evolutionary Systems and Applied Algorithmics group is working on machine-learning algorithms and agent technology. The main issue here is sufficient CPU cycles for testing variations on adaptive algorithms and for designing and simulating (large) multi-agent systems. The third group, Specification and Analysis of Embedded Systems, works on explicit-state model checking. In model checking the task is to verify that a model of a system satisfies a property. This can be split into two steps. The first step is generating the model (a very large graph called a state space), which is a CPU-intensive task; the second step is checking if the desired property holds in the model, which is a memory-intensive task. Originally, CWI's model-checking system was set up as a cluster application. Both steps were performed on the same types of machines. The choice of an asymmetric cluster has forced the Embedded Systems group to modify the system to a more Grid-like application, which assigns a task to the part of the network best equipped to handle it. To achieve this, the verification step is separated into two stages. In the first stage, the state space is reduced modulo bisimulation. The second stage verifies the property on the reduced state space. The reduction step yields a state space that has the same properties as the original but is often an order of magnitude smaller. To exploit this, the Embedded Systems group has developed distributed reduction tools. Without a distributed reduction stage, distributed state-space generation can easily produce state spaces that do not fit into the memory of a single machine. This would make validation and reduction impossible. Reduction can be useful even when using distributed model-checking tools, as the memory use of model-checking tools grows as a function of not only the size of the state space, but also the complexity of the property. Using these tools, the work on state-space generation can be focused on the edge part of the cluster, whereas the verification step can take as much advantage as possible from the fast interconnect of the core. At the end of this evolutionary path lies a true Grid application, in which state-space generation is performed wherever there is CPU time available, and verification is performed on either a high-performance cluster or a large SMP within the grid. The main issue currently holding back a Grid solution is the amount of data that must be transferred. In the model-checking system this can be up to half the memory. For database research the bandwidth requirements are even larger. The local storage of clusters and super-computers is usually capable of dealing with these amounts of data. However, the networks connecting the various Grid locations are typically quite slow compared to the internal networks. This can result in hours of data transfer for perhaps ten minutes of computation. DATAGRID research and infrastructure should allow model checking to work on a grid. Eventually, the internal database operations considered by the database group can become a Grid topic as well, but this a more distant possibility. The computational tasks of research into machine learning and agent technology could be met with Grid solutions. However, the rapid prototyping typically involved makes a small cluster an efficient least-effort solution. As grids becomes more pervasive and transparent to use, a transition to Grid technologyis highly probable. Link: Please contact: Sander Bohte, CWI, The Netherlands Niels Nes, CWI, The Netherlands |

|||