Towards Bio-Inspired Neural Networks for Visual Perception of Motion

by Claudio Castellanos Sánchez, Frédéric Alexandre and Bernard Girau

Data from neuroscience are used to implement cortex-like maps on an autonomous robot, allowing it to detect and analyse motion in the external world.

Visual perception of motion is a major challenge in machine perception research, since it plays a key role in a wide variety of tasks: path-finding, perception of shape from motion, depth segmentation, estimation of coincidence (time to collision, time to task achievement), determination of motion direction and speed, perception of gestures, orientation and movement control, and determination of the three-dimensional structure of the environment.

Recent research in neuroscience has extended our knowledge of the computational properties of individual neurons and of the interactions between neural circuits in the visual pathways. It has given us a solid basis for understanding how the processing of natural scenes arises from the structure and function of real brains. One goal of this line of research is to study the intricate relations between the motor and sensory patterns that emerge during active vision.

It is now known that the detection and analysis of motion are achieved by a cascade of neural operations. This starts with the registration of local motion signals within restricted regions of the visual field. These signals are then integrated into more global descriptions of the direction and speed of object motion.
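
The cascade from local motion signals to a global estimate of direction and speed can be illustrated with a classic psychophysical rule rather than the authors' network. Each local detector sees its stimulus through a small aperture and can only measure the speed component along its preferred direction, and the global velocity is recovered as the least-squares intersection of these constraints (the "intersection of constraints" rule). The sketch below is a minimal Python illustration under that assumption; the function names `integrate_local_motion` and `direction_and_speed` and the (theta, s) measurement format are hypothetical.

```python
import math


def integrate_local_motion(measurements):
    """Combine local, aperture-limited motion signals into one global velocity.

    measurements: list of (theta, s) pairs. A local detector tuned to
    direction theta (radians) only reports s = vx*cos(theta) + vy*sin(theta),
    the speed component along theta (the aperture problem). The global
    velocity (vx, vy) is the least-squares intersection of these constraints.
    """
    # Accumulate the 2x2 normal equations (A^T A) v = A^T s,
    # where each row of A is (cos theta, sin theta).
    a11 = a12 = a22 = b1 = b2 = 0.0
    for theta, s in measurements:
        c, sn = math.cos(theta), math.sin(theta)
        a11 += c * c
        a12 += c * sn
        a22 += sn * sn
        b1 += c * s
        b2 += sn * s
    det = a11 * a22 - a12 * a12
    if abs(det) < 1e-12:
        # All detectors share one orientation: the global motion is ambiguous.
        raise ValueError("local measurements do not constrain the velocity")
    vx = (a22 * b1 - a12 * b2) / det
    vy = (a11 * b2 - a12 * b1) / det
    return vx, vy


def direction_and_speed(vx, vy):
    """Global description: direction in degrees and speed (same units as s)."""
    return math.degrees(math.atan2(vy, vx)), math.hypot(vx, vy)
```

For instance, three detectors tuned to 0°, 45° and 90° that observe a surface moving at (2, 1) report 2, 3/√2 and 1; `integrate_local_motion` recovers (2, 1), whereas no single detector could do so on its own.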

In the domain of autonomous robotics, the notion of emergence may serve as a major guideline for integrating different individual tasks. This integration requires the robot to cope autonomously with complex and changing environments. Connectionist models are therefore particularly well suited here, since both their learning and their computation are inherently robust, adaptive and embeddable.

Until now, most neural models of perceptual tasks, and of visual tasks in particular, have not satisfied the strong constraints imposed by autonomy. Our team is working to define a set of connectionist models that perform various tasks of real-time dynamic visual perception for autonomous robotics. We aim to integrate the resulting methods and algorithms into autonomous mobile robots working in unknown and dynamic environments. This work lies at the intersection of two of our team's research areas: on the one hand, the design of connectionist models inspired by the cerebral cortex that perform various perceptual and cognitive tasks for autonomous systems; on the other hand, the definition of connectionist models of computation in embedded reconfigurable circuits (to make real-time constraints easier to satisfy).


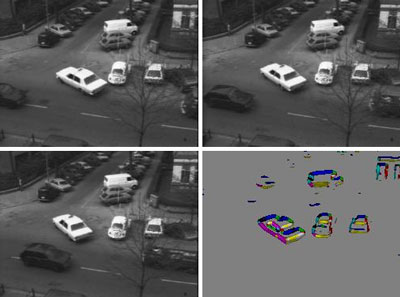

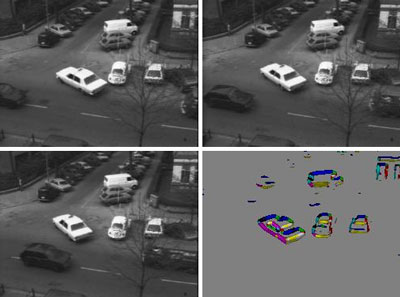

Figure 1: Three images from a visual sequence and the corresponding motion extracted by the neuronal model.

In our approach to the visual perception of motion, we mainly focus on two fundamental problems in processing an image sequence: first, computing its optical flow (a three-stage process: pre-processing based on filters, extraction of elementary characteristics, and integration into a 2D optical flow); and second, extracting the egomotion. This work faces many concrete difficulties, such as specular effects, shadowing, texturing, occlusion and the aperture problem. Moreover, the complexity of the task must be handled while meeting the constraints of real-time processing.
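
The three-stage optical-flow process can be sketched with a conventional, non-neural stand-in: a 3x3 box filter for pre-processing, spatial and temporal derivatives as the elementary characteristics, and a Lucas-Kanade style least-squares integration over a small window for the 2D flow. This is not the authors' model, which relies on spatio-temporal filters and neural processing; every function name and parameter here (the window radius `r`, the determinant threshold) is an assumption for illustration. Returning `None` when the gradients in a window are parallel shows how the aperture problem surfaces in this formulation.

```python
def smooth(img):
    """Stage 1 (pre-processing): 3x3 box filter, clamping at the borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            acc = 0.0
            for dy in (-1, 0, 1):
                for dx in (-1, 0, 1):
                    yy = min(max(y + dy, 0), h - 1)
                    xx = min(max(x + dx, 0), w - 1)
                    acc += img[yy][xx]
            out[y][x] = acc / 9.0
    return out


def derivatives(f0, f1):
    """Stage 2 (elementary characteristics): spatial and temporal gradients."""
    h, w = len(f0), len(f0[0])
    ix = [[0.0] * w for _ in range(h)]
    iy = [[0.0] * w for _ in range(h)]
    it = [[0.0] * w for _ in range(h)]
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            # Central differences, averaged over the two frames.
            ix[y][x] = 0.25 * (f0[y][x + 1] - f0[y][x - 1] + f1[y][x + 1] - f1[y][x - 1])
            iy[y][x] = 0.25 * (f0[y + 1][x] - f0[y - 1][x] + f1[y + 1][x] - f1[y - 1][x])
            it[y][x] = f1[y][x] - f0[y][x]
    return ix, iy, it


def flow_at(ix, iy, it, y, x, r=2):
    """Stage 3 (integration): least-squares solution of Ix*u + Iy*v + It = 0
    over a (2r+1) x (2r+1) window centred on (y, x)."""
    sxx = sxy = syy = sxt = syt = 0.0
    for yy in range(y - r, y + r + 1):
        for xx in range(x - r, x + r + 1):
            gx, gy, gt = ix[yy][xx], iy[yy][xx], it[yy][xx]
            sxx += gx * gx
            sxy += gx * gy
            syy += gy * gy
            sxt += gx * gt
            syt += gy * gt
    det = sxx * syy - sxy * sxy
    if abs(det) < 1e-9:
        return None  # aperture problem: gradients in the window are parallel
    # Solve [[sxx, sxy], [sxy, syy]] @ (u, v) = -(sxt, syt).
    u = (-syy * sxt + sxy * syt) / det
    v = (sxy * sxt - sxx * syt) / det
    return u, v


def optical_flow(frame0, frame1, y, x, r=2):
    """Chain the three stages to estimate the flow (u, v) at pixel (y, x)."""
    ix, iy, it = derivatives(smooth(frame0), smooth(frame1))
    return flow_at(ix, iy, it, y, x, r)
```

With a quadratic test pattern translated by (0.5, -0.25) pixels, `optical_flow(f0, f1, 7, 7)` recovers that displacement at the centre of a 15x15 frame, while a pattern that varies only along x yields `None`.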

We are currently developing a bio-inspired neural architecture to detect, extract and segment the direction and speed components of the optical flow within image sequences. The structure of this model follows directly the route taken by visual motion information in the human brain: it begins in the retina and undergoes different processing at every stage of the magnocellular pathway, through the thalamus and on to the cortex. More precisely, our model mostly reproduces the properties of three cortical areas, V1, MT (middle temporal) and MST (medial superior temporal). The MT area detects patterns of movement, while spatio-temporal integration is performed at the local level by V1 and at the global level by both MT and MST, so that this multi-level detection and integration can discriminate egomotion from the movements of objects in a scene and from the scene itself.
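
The separation of egomotion from object motion can be illustrated with a deliberately simple global model, which is our assumption and not the MT/MST circuitry described above. The flow caused by the robot's own movement is approximated by a uniform translation plus an isotropic expansion (the kind of optic-flow pattern MST cells are classically reported to respond to), fitted by least squares; flow vectors that the fit cannot explain are flagged as independently moving objects. Real egomotion also involves rotation and depth-dependent parallax, which this sketch ignores. The function names, the threshold and the iteration count are hypothetical.

```python
import math


def fit_global_motion(points):
    """Least-squares fit of u = a + d*x, v = b + d*y to points (x, y, u, v).

    (a, b) is a global translation and d an isotropic expansion rate
    (d > 0 when the camera moves towards the scene)."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    mu = sum(p[2] for p in points) / n
    mv = sum(p[3] for p in points) / n
    num = sum((x - mx) * (u - mu) + (y - my) * (v - mv) for x, y, u, v in points)
    den = sum((x - mx) ** 2 + (y - my) ** 2 for x, y, _, _ in points)
    d = num / den if den > 0 else 0.0
    return mu - d * mx, mv - d * my, d


def residual(point, a, b, d):
    """Length of the part of a flow vector the global model does not explain."""
    x, y, u, v = point
    return math.hypot(u - (a + d * x), v - (b + d * y))


def separate_egomotion(points, threshold=1.0, iterations=3):
    """Return ((a, b, d), movers): the global (ego)motion model and the
    points whose flow deviates from it by at least `threshold`."""
    inliers = list(points)
    for _ in range(iterations):
        model = fit_global_motion(inliers)
        kept = [p for p in points if residual(p, *model) < threshold]
        if not kept:
            break  # nothing agrees with the model; keep the previous inliers
        inliers = kept  # refit without the outliers (likely moving objects)
    model = fit_global_motion(inliers)
    movers = [p for p in points if residual(p, *model) >= threshold]
    return model, movers
```

Flow vectors are given as (x, y, u, v) with coordinates measured from the image centre. Refitting on the inliers keeps a few independently moving objects from biasing the egomotion estimate, which a single least-squares pass would not do.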

Our first attempts dealt mainly with manipulating the optical flow by testing various spatio-temporal filters followed by neural processing based on shunting inhibition (an example is given in Figure 1). Current work focuses on defining a model of the magnocellular pathway that detects the movement of one or several objects in various dynamic scenes after egomotion extraction. Further research will address another challenging perception-action mechanism in autonomous robotics: interpreting the movements of a target in dynamic environments.
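
The article does not give the equations of its shunting-inhibition stage. A common formulation, assumed here, is Grossberg's shunting equation dx/dt = -A x + (B - x) E - (x + D) I, where E and I are the excitatory and inhibitory inputs to a unit. Setting dx/dt = 0 gives the equilibrium x* = (B E - D I) / (A + E + I), which stays strictly within (-D, B) for non-negative inputs and divides each response by the total input, normalising filter outputs across contrast. The Python sketch below integrates the equation with forward Euler and checks it against the closed-form equilibrium; the parameter names A, B, D, the step size and the all-to-all inhibition in `shunting_layer` are hypothetical.

```python
def shunting_step(x, E, I, A=1.0, B=1.0, D=1.0, dt=0.01):
    """One forward-Euler step of dx/dt = -A*x + (B - x)*E - (x + D)*I."""
    return x + dt * (-A * x + (B - x) * E - (x + D) * I)


def shunting_equilibrium(E, I, A=1.0, B=1.0, D=1.0):
    """Steady state obtained by setting dx/dt = 0."""
    return (B * E - D * I) / (A + E + I)


def shunting_layer(responses, A=1.0, B=1.0, D=1.0):
    """Steady-state activity of a layer in which each unit is excited by its
    own filter response and inhibited by the responses of all other units."""
    total = sum(responses)
    return [shunting_equilibrium(r, total - r, A, B, D) for r in responses]
```

Starting from x = 0 with E = 2 and I = 0.5, repeated calls to `shunting_step` converge to `shunting_equilibrium(2.0, 0.5)`, that is 1.5 / 3.5. With the default parameters, `shunting_layer([10.0, 0.0, 0.0])` gives 10/11 for the stimulated unit and -10/11 for the others, so even a strong input leaves every unit strictly between -D and B.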

Link:

http://www.loria.fr/equipes/cortex

Please contact:

Claudio Castellanos Sánchez, Frédéric Alexandre and Bernard Girau

Laboratoire Lorrain de Recherche en Informatique et ses Applications (LORIA)/INRIA

Tel: +33 3 8359 2053

E-mail: {castella,falex,girau}@loria.fr
