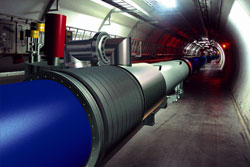

The CERN openlab for DataGrid Applications

by Andreas Unterkircher, Sverre Jarp and Andreas Hirstius

The CERN openlab is a collaboration between CERN and industrial partners to develop data-intensive grid technology to be used by a worldwide community of scientists working at the next-generation Large Hadron Collider.

CERN, the European Organization for Nuclear Research, is currently constructing the Large Hadron Collider (LHC) which will be the largest and most powerful particle accelerator ever built. It will commence operation in 2007 and will run for up to two decades. Four detectors placed around the 27 km LHC tunnel on the outskirts of Geneva will produce about 15 Petabytes of data per year. Storing such huge amounts of data in a distributed fashion and making it accessible to thousands of scientists around the world requires much more than a single organization can provide. CERN has therefore launched the LHC Computing Grid (LCG), with the mission of integrating tens of thousands of computers at dozens of participating centres worldwide into a global computing resource. At the time of writing, the LCG provides about 5200 CPUs and 7.5 TB storage at 68 sites.

In this context CERN established the ‘CERN openlab for DataGrid Applications’, a new type of partnership between CERN and industry. Its goal is to provide CERN with the latest industrial technology in order that it might anticipate possible future commodity solutions and adjust its Grid technology roadmap accordingly. The focus of openlab's research is to find solutions that go beyond prototyping, and thus provide valuable input to the LCG project. In this article we highlight some of our research projects.

Openlab runs a so-called opencluster comprising just over 100 dual-processor Itanium-2 HP rx2600 nodes running Linux. Several machines are equipped with 10 GbE network interface cards delivered by Intel. Enterasys provided four 10-Gbps ‘N7’ switches and IBM delivered 28 TB of storage with its ‘Storage Tank’ file system.

Openlab actively participates in data challenges run by CERN to simulate all aspects of data collection and analysis over the grid. One challenge currently in preparation aims to find the limits of GridFTP when sending huge amounts of data between CERN and several major Grid sites around the world. In addition we focus on an overall ‘10 Gigabit challenge’, namely, how to achieve data transfer rates of 10 Gb/s within the opencluster as well as to other sites. This involves technological issues such as network-switch tuning and Linux kernel and TCP/IP parameter tuning. With a view towards cluster-based grid computing we are evaluating a 12-way InfiniBand switch from Voltaire. This piece of technology allows data to be streamed into the opencluster very quickly with minimal loss of CPU cycles.

One of our key goals has been to participate fully in LCG. However, LCG's middleware had initially been targeted only at the x86 platform. We therefore had to port the middleware to Itanium, and for several months this became a major task at openlab. The LCG software stack consists of a specially patched Globus 2.4 version provided by the Virtual Data Toolkit (VDT), middleware developed by the European Data Grid (EDG) project, and several tools delivered by the high energy physics community. We are able to provide a fully functional LCG grid node on Itanium systems, including worker nodes, computing elements, the user interface, storage elements and resource brokers. Thanks to this effort, a new level of heterogeneity has been added to LCG.
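To give a feel for why TCP/IP parameter tuning matters in the ‘10 Gigabit challenge’ mentioned above, the following minimal sketch computes the bandwidth-delay product, i.e. the amount of data that must be in flight to keep a link full. The round-trip times and the 64 KiB window are illustrative assumptions, not openlab measurements, and the function names are hypothetical.

```python
def bdp_bytes(link_gbps: float, rtt_ms: float) -> int:
    """Bytes that must be in flight to keep a link of the given speed full."""
    bits_in_flight = link_gbps * 1e9 * rtt_ms / 1e3
    return round(bits_in_flight / 8)


def max_throughput_gbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on single-stream TCP throughput for a fixed window size."""
    return window_bytes * 8 / (rtt_ms / 1e3) / 1e9


if __name__ == "__main__":
    # Assumed round-trip times (illustrative values, not measurements).
    for label, rtt_ms in [("local cluster", 0.1), ("wide-area link", 150.0)]:
        window = bdp_bytes(10, rtt_ms)
        print(f"{label}: RTT {rtt_ms} ms needs a window of {window:,} bytes for 10 Gb/s")

    # A 64 KiB window (assumed here as a small untuned value) over the same wide-area link.
    limit = max_throughput_gbps(64 * 1024, 150.0)
    print(f"64 KiB window at 150 ms RTT: at most {limit:.4f} Gb/s")
```

Under these assumptions, a single stream over a long-distance path needs a window several orders of magnitude larger than one inside the cluster, which is why kernel buffer limits and window scaling become part of the tuning work.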
As the first commercial member of LCG, HP is contributing Itanium nodes from its sites at Puerto Rico and Bristol (UK), with the technical help of openlab staff.

Having different platforms poses new challenges to grid computing. Scientific software is usually distributed in the form of optimized binaries for every platform and is sometimes even tightly coupled to specific versions of the operating system. One of our projects at openlab is to investigate the potential of virtualization within grid computing by using the virtual machine monitor Xen, developed by the University of Cambridge. A grid node executing a task should thus be able to provide exactly the environment needed by the application.

Another area of interest is automated installation of grid nodes. Because the LCG middleware originates from different sources, installing and deploying it is a non-trivial task. We use the SmartFrog framework, a technology developed by HP Labs and given open-source status this year, to automatically install and manage LCG nodes. Of particular interest is the possibility of dynamically adding resources (i.e. worker nodes and storage elements) to a grid node and removing them from it.

Earlier this year Oracle joined openlab as a sponsor. The first project within this collaboration aims at reducing the downtime of LCG's replica catalogue, which ensures correct mapping of filenames and file identifications. The catalogue runs on the Oracle database. With the help of Oracle's technology, catalogue downtime (e.g. for maintenance reasons) has been reduced from hours to minutes.

Openlab's core team consists of three CERN staff members, four fellows and six summer students. Plans for the future of openlab include increasing the number of nodes and upgrading the high-speed switching environment (both Ethernet and InfiniBand). The software activities will continue to focus on participation in the LHC data challenges as well as supporting LCG on the Itanium platform.
