Shape Perception of 3D Virtual Objects by Tactile Feedback Systems

by Massimo Bergamasco

The capability to touch and manipulate virtual objects with natural hand movements is a fundamental requirement for future applications of Virtual Environment technologies. In the real world, the recognition of an object's distinctive features, such as geometrical shape, weight, hardness, thermal conditions and surface texture, is performed through exploratory procedures of the hand, which the user (observer) actively controls in order to maintain contact with the object. The perceptual system devoted to the recognition of such features is called 'haptics'. The sensory information that the haptic system exploits to recognize real objects consists of kinesthetic and cutaneous inputs. Kinesthetic inputs refer to the perception of the spatial configuration of the hand and fingers, while cutaneous inputs deal with the perception of the contact conditions between the human hand and the real object (cutaneous input derives primarily from tactile and proprioceptive sensors).

An interface system capable of generating adequate cutaneous stimuli on the user's hand is a primary need when the problem of recognizing an object's features is transferred from a real environment to a virtual one, in which the objects are simulated by the computer. Such interface systems are called haptic interfaces or haptic displays, and they have two functions:

- to record the movements of the real hand and fingers in order to replicate them in the virtual environment

- to generate contact force and tactile stimuli on the user's hand.

By means of a haptic display, the user can perform the same patterns of movement as in a real environment while perceiving artificial cutaneous stimuli during contact with the virtual object.

The research activity at the PERCRO laboratory of the Scuola Superiore S. Anna, Pisa, is primarily devoted to the development of complete virtual environment systems including haptic interfaces. The haptic display applications developed at PERCRO concern experiments on the recognition of the shape and surface texture of virtual objects. We present here the interface system employed for these experiments, together with the preliminary results.

The haptic display system

The complete Virtual Environment (VE) system for the control of exploratory procedures of virtual objects consists of a set of software modules running on a Silicon Graphics VGX440 workstation and a haptic display worn by the human operator. The software modules are:

- modeling and real-time visualization module for the graphic representation of the virtual scenario (virtual hand, virtual object, environment)

- collision detection module to determine contact areas between the virtual hand and the virtual object

- force generation module calculating the contact force for each contact area

- tracking module for the acquisition of the hand movement data deriving from a tracking sensor and the kinesthetic sensors of the haptic display.
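The way these modules hand data to one another can be illustrated with a minimal sketch of one update cycle. It is not the PERCRO implementation: the spherical virtual object, the fingertip positions, the names `detect_contacts` and `contact_forces`, and the penalty-style force law (stiffness times interpenetration depth) are all assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass

@dataclass
class Contact:
    finger: str     # name of the fingertip in contact
    depth: float    # interpenetration depth in metres
    normal: tuple   # outward unit normal of the surface at the contact

def detect_contacts(fingertips, center, radius):
    """Collision detection: find fingertip points lying inside a sphere."""
    contacts = []
    for name, p in fingertips.items():
        d = [p[i] - center[i] for i in range(3)]
        dist = math.sqrt(sum(c * c for c in d))
        if 0.0 < dist < radius:
            normal = tuple(c / dist for c in d)
            contacts.append(Contact(name, radius - dist, normal))
    return contacts

def contact_forces(contacts, stiffness=500.0):
    """Force generation: assumed penalty model, k * depth along the normal."""
    return {
        c.finger: tuple(stiffness * c.depth * n for n in c.normal)
        for c in contacts
    }

# Tracking output is hypothetical: fingertip positions in metres.
fingertips = {"index": (0.0, 0.0, 0.045), "thumb": (0.1, 0.0, 0.0)}
contacts = detect_contacts(fingertips, center=(0.0, 0.0, 0.0), radius=0.05)
forces = contact_forces(contacts)
print(forces)
```

In this example only the index fingertip lies inside the sphere, so only one contact area is passed on to the force computation; the thumb produces no contact.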

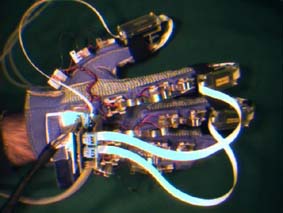

The latest version of the glove-like advanced interface.

The haptic display consists of a sensorized glove with tactile effectors integrated at the fingertips. The glove has 20 kinesthetic sensors, one located at each phalanx, so that flexion-extension and abduction-adduction movements can be recorded and reproduced in the VE for each finger. The tactile effectors generate both thermal and indentation stimuli on the fingertips. Each effector consists of a 4x4 array of microsolenoids, controlled by a microcontroller, that reproduces the microscopic and macroscopic geometrical features of the virtual object. A thermal effector is integrated with this array and generates the appropriate heat flow at the contact area with the fingertip.
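As an illustration of how a 4x4 array can render a geometrical feature, the following sketch samples a local surface height function on a 4x4 grid and raises a pin wherever the surface is above a threshold. The function `pin_pattern`, the 12 mm pad width and the step-edge surface are hypothetical; the article does not describe the actual drive logic of the microsolenoids.

```python
def pin_pattern(height_at, pad_width=0.012, n=4, threshold=0.0):
    """Return an n x n grid of booleans: True where a pin should be raised.

    height_at(x, y) gives the local surface height under the fingertip,
    with (0, 0) at the centre of the pad. pad_width is an assumed value.
    """
    step = pad_width / n
    pattern = []
    for row in range(n):
        y = (row + 0.5) * step - pad_width / 2
        pattern.append([
            height_at((col + 0.5) * step - pad_width / 2, y) > threshold
            for col in range(n)
        ])
    return pattern

def step_edge(x, y):
    """Hypothetical surface: a raised region on the side where x > 0."""
    return 1.0 if x > 0 else 0.0

pattern = pin_pattern(step_edge)
for row in pattern:
    print("".join("#" if raised else "." for raised in row))
```

With the edge running through the middle of the pad, the two columns on the raised side are active in every row, which is the kind of contrast a user could read as an edge.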

Experimental activity

Experiments have been carried out with users wearing the sensorized glove with the tactile effectors. The virtual scenario, showing the virtual hand and one of the virtual objects (ranging from simple geometrical polyhedra to complex architectural artifacts), is displayed on the screen of the graphic workstation. The user controls the movements of the virtual hand through a tracking sensor and those of the virtual fingers through the kinesthetic sensors of the glove. The user starts exploring the surface shape of the virtual object by performing natural movements of the hand and fingers. The tactile effectors use the information coming from the collision detection module to generate the appropriate pattern of indentation according to the relative position of the virtual fingers on the surface of the virtual object, the relative velocity of exploration and the depth of interpenetration between the virtual object's surface and the virtual hand. The user then guides the movements of his/her hand by exploiting the indentation and thermal stimuli generated by the haptic display.
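One possible way to combine these three quantities is sketched below. It is an assumption made for illustration, not the method used in the experiments: the pattern of raised pins is taken as given for the current finger position, the interpenetration depth sets a normalized drive level, and the exploration speed sets how often the pattern would need refreshing. The names `pin_levels` and `refresh_period`, the 2 mm saturation depth and the 3 mm pin pitch are hypothetical.

```python
def pin_levels(pattern, depth, max_depth=0.002):
    """Scale a boolean pin pattern by the clamped interpenetration depth.

    Returns drive levels between 0.0 and 1.0. A purely on/off actuator
    would threshold these values instead of using them directly.
    """
    level = min(max(depth / max_depth, 0.0), 1.0)
    return [[level if raised else 0.0 for raised in row] for row in pattern]

def refresh_period(speed, pin_pitch=0.003, slowest=0.1):
    """Time in seconds for the fingertip to travel one pin pitch.

    The result is capped at `slowest` so a resting finger is still updated.
    """
    if speed <= 0.0:
        return slowest
    return min(pin_pitch / speed, slowest)

# Pattern for a fingertip lying across an edge (assumed input).
pattern = [[False, False, True, True]] * 4
levels = pin_levels(pattern, depth=0.001)      # half of the saturation depth
period = refresh_period(speed=0.06)            # fingertip moving at 6 cm/s
print(levels[0], period)
```

A negative depth, meaning the finger is not in contact, yields all-zero levels, so no indentation is produced away from the surface.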

Users were able to perform exploratory procedures on virtual objects and successfully recognize geometrical features such as holes, edges and vertices with only cutaneous and kinesthetic feedback. The same result was also obtained when no direct visual feedback of the operation was provided. We intend to integrate force feedback capabilities into the haptic display shortly. The introduction of anthropomorphic haptic interfaces into a Virtual Environment system must be considered a fundamental functionality for the control of direct manipulation, grasping and exploration of virtual objects in the near future.

The haptic device and its application described in this paper have been developed under the Basic Research contract No. 6358 SCATIS funded by the European Union and the CNR project Conoscenze per Immagini. For more information, see: http://www-percro.sssup.it/

Please contact:

Massimo Bergamasco - PERCRO, Scuola Superiore S. Anna

Tel: +39 50 883289

E-mail: massimo@percro.sssup.it