ERCIM News No.44 - January 2001 [contents]

by Enrico Gobbetti and Riccardo Scateni

Scientists at CRS4, the Center for Advanced Studies, Research and Development in Cagliari, Sardinia, Italy, have developed a time-critical rendering algorithm that relies upon a scene description in which objects are represented as multiresolution meshes. In collaboration with other European partners, this technique has been applied to the visual and collaborative exploration of large digital mock-ups.

When undertaking a large and lengthy engineering or architectural project, it is vital to check frequently the possible consequences of decisions taken during the design phase. The aim of virtual prototyping research is to allow architects, engineers and designers to work on digital mock-ups which simulate the visual appearance and behaviour of objects on a computer. The expected benefits of virtual prototyping technology include: a substantial reduction in development time and manufacturing costs, thanks to a reduced need for expensive physical mock-ups; the ability to keep digital mock-ups in sync with the design, and therefore to use them for documentation and to support dialogue between engineers from different fields, who may describe the same thing in different terms; and the possibility of using mock-ups during collaborative design sessions among geographically distant partners, which is very difficult with physical prototypes.

Time-critical Rendering

As with all virtual reality applications, virtual prototyping systems have very stringent performance requirements: low visual feedback bandwidth can destroy the illusion of animation, while high latency can induce simulation sickness and a loss of the feeling of control. Since virtual prototyping tools have to deal with very large dynamic graphic scenes with a complex geometric description, rendering speed is often a major bottleneck. When converted at adequate accuracy, virtual prototypes often exceed millions of polygons and hundreds of objects, which poses important challenges to application developers in terms of both memory and speed.

Since the complexity of a scene from a given viewpoint is potentially unbounded, meeting the performance requirements of the human perceptual system requires the ability to trade rendering quality for speed. Ideally, this time/quality conflict should be handled with adaptive techniques that cope with a wide range of viewing conditions while avoiding worst-case assumptions. The presence of moving parts and the need for interaction between virtual prototyping tools limit the amount of pre-computation possible, implying a run-time solution.
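The trade-off between quality and speed can be made concrete as a per-frame polygon budget. The following is a minimal sketch, not the authors' implementation: it assumes that rendering cost is roughly proportional to the number of triangles drawn, and the names `triangle_budget`, `update_throughput`, `overhead_s` and `smoothing` are hypothetical.

```python
def triangle_budget(target_fps, tris_per_second, overhead_s=0.0):
    """Triangles that fit in one frame at the target frame rate.

    Assumes a linear cost model: time = overhead + triangles / throughput.
    """
    frame_time = 1.0 / target_fps
    return max(0, int((frame_time - overhead_s) * tris_per_second))


def update_throughput(estimate, tris_drawn, seconds_taken, smoothing=0.2):
    """Blend the last measured throughput into the running estimate.

    Exponential smoothing is an illustrative choice, used here so that the
    budget adapts to changing conditions without relying on a worst case.
    """
    measured = tris_drawn / seconds_taken
    return (1.0 - smoothing) * estimate + smoothing * measured


if __name__ == "__main__":
    throughput = 1_000_000.0  # assumed initial estimate, triangles per second
    budget = triangle_budget(target_fps=4, tris_per_second=throughput)
    print("triangles per frame:", budget)
    throughput = update_throughput(throughput, tris_drawn=budget, seconds_taken=0.3)
    print("updated throughput estimate:", throughput)
```

The budget computed this way is the quantity that a level-of-detail selection step would then have to respect for each frame.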

Time-critical rendering of scenes composed of many objects is an open research area. The traditional approach to rendering such scenes in a time-critical setting is to pre-compute a small number of independent level-of-detail (LOD) representations of each object in the scene, and to switch between these LODs at run time. This technique has drawbacks both in terms of memory requirements, because several LODs must be stored for every object, and in terms of quality of results, because choosing the best combination of discrete LODs within a time budget is an NP-complete problem, so that in practice only approximate solutions can be computed at run time. We recently demonstrated that these drawbacks can be overcome by using appropriate multiresolution data structures (TOM, Totally Ordered Mesh), which make it possible to express predictive LOD selection in the framework of continuous convex constrained optimization.
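To illustrate what a continuous formulation of LOD selection can look like, here is a minimal sketch under stated assumptions. Each object has a resolution r in [0, 1], its cost is the number of triangles drawn, and its benefit is `weight * sqrt(r)`. This benefit model, the bisection solver and the name `select_resolutions` are illustrative assumptions and not the cost and benefit heuristics of the TOM-based system.

```python
def select_resolutions(weights, max_tris, budget, iters=60):
    """Pick resolutions r_i in [0, 1] that maximise sum(w_i * sqrt(r_i))
    subject to sum(n_i * r_i) <= budget (weights and n_i must be positive).

    The objective is concave and the constraint linear, so the optimum
    satisfies r_i = min(1, (w_i / (2 * lam * n_i)) ** 2) for one multiplier
    lam, which is found here by bisection on the total cost.
    """
    if sum(max_tris) <= budget:
        return [1.0] * len(weights)  # everything fits at full resolution

    def resolutions(lam):
        return [min(1.0, (w / (2.0 * lam * n)) ** 2)
                for w, n in zip(weights, max_tris)]

    def cost(lam):
        return sum(n * r for n, r in zip(max_tris, resolutions(lam)))

    # Bracket the multiplier: cost(lam) decreases as lam grows.
    lam = 1.0
    while cost(lam) > budget:
        lam *= 2.0
    while cost(lam / 2.0) <= budget:
        lam /= 2.0
    lo, hi = lam / 2.0, lam  # cost(hi) <= budget < cost(lo)

    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if cost(mid) > budget:
            lo = mid
        else:
            hi = mid
    return resolutions(hi)  # always within budget


if __name__ == "__main__":
    # Hypothetical scene: a more important object and a less important one.
    weights = [4.0, 1.0]
    max_tris = [1000, 1000]
    for r in select_resolutions(weights, max_tris, budget=1000):
        print(round(r, 3))
```

Because resolution varies continuously, the solver never has to search over combinations of discrete LODs; the available budget is shared among the objects according to their marginal benefit.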

Visual Exploration

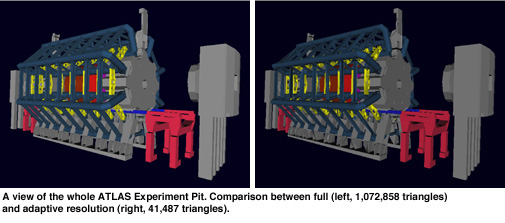

Our time-critical multiresolution scene rendering algorithm has been implemented and tested on both UNIX and Windows platforms. The TOM data structure is integrated in a collaborative virtual prototyping system developed in the framework of the ESPRIT project CAVALCADE (#26285, 1/1998-2/2000). All software modules are integrated in an in-house walkthrough application. We experimented with the system using multiple massive datasets, including the digital model of a very large machine (ATLAS) that is under construction at CERN in Geneva. When completed, this will be the largest machine for High Energy Physics in the world.

The results obtained suggest that our approach can be usefully employed for visual analysis at an early stage in the design phase of complex engineering or architectural models. It can handle scenes totaling millions of polygons and hundreds of independent objects on a standard graphics PC. In a controlled environment, it guarantees a uniform, bounded frame rate even for widely changing viewing conditions. The technique does not rely on visibility preprocessing and can be readily employed on animated scenes and interactive VR applications.

We believe that ultimately a time-critical rendering system should combine algorithms such as ours with occlusion culling, image-based rendering and other acceleration methods. The system should automatically partition the scene, choosing the most appropriate methods for different sets of objects based on cost/quality considerations and algorithm constraints. All these technologies have to be integrated in the same rendering context. This aspect is often neglected in current research. Integration is made more complex by the fact that dynamic LOD and visibility culling often have different time constraints.

Links:

http://www.crs4.it/vvr

Please Contact:

Enrico Gobbetti - CRS4

Tel: +39 070 2796 1

E-mail: gobbetti@crs4.it