ERCIM News No.44 - January 2001 [contents]

by Samuel Boivin and André Gagalowicz

Several techniques have recently been developed for the geometric and photometric reconstruction of indoor scenes. The MIRAGES team at INRIA-Rocquencourt has designed a new analysis/synthesis method that can recover the reflectances of all surfaces in a scene, including anisotropic ones, using only a single image taken with a standard camera and a geometric model of the scene.

The first step of the technique developed by MIRAGES is to capture a single photograph of the scene that we want to reconstruct. The second step is to position the 3D model of the scene (including the light sources), which can be built manually from the real image with a classical modeler such as Maya (Alias|Wavefront). Once the parameters of the camera (position, orientation, ...) have been automatically recovered, our image-based rendering software can recover a full BRDF (Bi-directional Reflectance Distribution Function) for all the objects in the scene. Starting from an initial assumption about the reflectances of these surfaces, we generate a computer graphics image using Phoenix, an advanced photorealistic rendering software developed by our team. Phoenix is a global illumination renderer that can produce photorealistic images in a very short time (faster than current radiosity software). It takes advantage of the internal architecture of the computer to greatly accelerate image generation.

The first image created by Phoenix, using the initial hypothesis about the reflectances of the objects, is directly compared to the real one by computing a difference or a more complex operation. The resulting error image is then used to correct the estimated reflectances until the synthetic image is as close as possible to the real one. Our image-based rendering technique is iterative because it repeatedly corrects the BRDF of the 3D surfaces, and it is hierarchical because it proceeds with increasingly complex assumptions about the photometry of the objects in the scene. If a particular reflectance property has been tried for a surface and still produces an image with large errors over the whole surface area, the reflectance is automatically forced to be a more complex one (perfect specular or isotropic, for example). Using these new assumptions, a new synthetic image is created and compared again to the real image, to assess whether the error between the two images has decreased. If the error for an object is smaller than a user-defined threshold, its photometry is considered to be correctly simulated and it does not need a more complex BRDF. Moreover, when there are strong optical and thermal interactions between two objects (suppose, for example, that a book is lying on an aluminium sheet and is reflected in this sheet), our technique uses several complex algorithms to estimate the reflectances of the two objects together, because they interact with one another simultaneously. This is a very complex procedure that can require several minutes of computation time, depending on the complexity of the BRDF of the surface: the most complicated case that we treat is that of anisotropic surfaces in which highly textured objects are reflected.
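The iterative and hierarchical loop described above can be illustrated with a minimal Python sketch. It is not the MIRAGES implementation and does not use Phoenix: the toy one-dimensional "images", the two reflectance models (`diffuse` and `diffuse+specular`), the parameter names `rho` and `ks`, the mean absolute difference used as the error measure, and the simple coordinate search used as the correction step are all assumptions chosen for illustration. Only the overall structure follows the text: render, compare to the real image, correct, and move to a more complex model when the error stays above a user-defined threshold.

```python
from typing import Callable, Dict, List, Tuple

Image = List[float]                    # toy "image": one intensity per pixel
Params = Dict[str, float]              # reflectance parameters of one surface
Renderer = Callable[[Params], Image]   # hypothetical stand-in for a real renderer
Level = Tuple[str, Renderer, Params]   # (model name, renderer, initial guess)


def image_error(real: Image, synthetic: Image) -> float:
    """Mean absolute difference between the real and the synthetic image."""
    return sum(abs(r - s) for r, s in zip(real, synthetic)) / len(real)


def refine(real: Image, render: Renderer, initial: Params,
           delta: float = 0.25, min_delta: float = 1e-4,
           max_rounds: int = 10_000) -> Tuple[Params, float]:
    """Iteratively correct the parameters of ONE reflectance model.

    Assumed correction rule: try moving each parameter by +/- delta, keep
    any move that lowers the error, and halve delta when nothing improves.
    """
    params = dict(initial)
    best = image_error(real, render(params))
    rounds = 0
    while delta >= min_delta and rounds < max_rounds:
        rounds += 1
        improved = False
        for name in list(params):
            for sign in (1.0, -1.0):
                trial = dict(params)
                trial[name] += sign * delta
                err = image_error(real, render(trial))
                if err < best:
                    params, best, improved = trial, err, True
        if not improved:
            delta /= 2.0
    return params, best


def estimate_reflectance(real: Image, hierarchy: List[Level],
                         threshold: float) -> Tuple[str, Params, float]:
    """Try models from simplest to most complex; stop at the first good fit."""
    if not hierarchy:
        raise ValueError("hierarchy must contain at least one model")
    for name, render, initial in hierarchy:
        params, err = refine(real, render, initial)
        if err <= threshold:
            break  # photometry considered correctly simulated
    # If no model reached the threshold, the most complex one is returned.
    return name, params, err


# Toy scene (assumption): five pixels, with a highlight on the middle one.
HIGHLIGHT = [0.0, 0.0, 1.0, 0.0, 0.0]


def render_diffuse(p: Params) -> Image:
    return [p["rho"] for _ in HIGHLIGHT]


def render_diffuse_specular(p: Params) -> Image:
    return [p["rho"] + p["ks"] * h for h in HIGHLIGHT]


# The "real" image is synthesised here so that the example is self-contained.
real_image = render_diffuse_specular({"rho": 0.3, "ks": 0.6})
hierarchy = [
    ("diffuse", render_diffuse, {"rho": 0.5}),
    ("diffuse+specular", render_diffuse_specular, {"rho": 0.5, "ks": 0.0}),
]
model, params, err = estimate_reflectance(real_image, hierarchy, threshold=0.01)
print(model, params, err)
```

In this toy setting the purely diffuse model cannot explain the highlight, so its error stays above the threshold and the loop falls through to the more complex model. A surface whose real image is uniform would instead be accepted at the first level.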

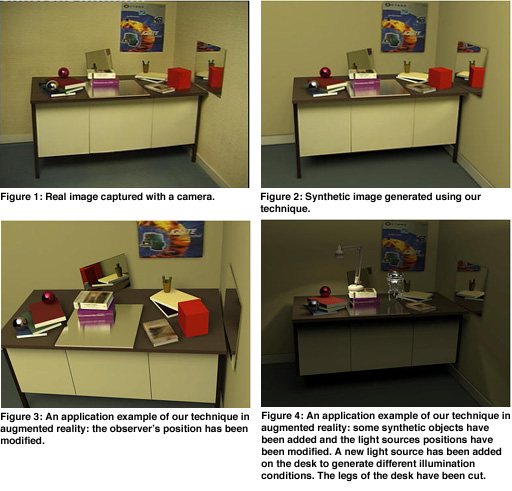

We show some results in this presentation, including a real image (figure 1) and the fully (photometrically and geometrically) reconstructed synthetic image (figure 2). The mirrors and the aluminium sheet are simulated as real mirrors and real complex BRDF surfaces respectively: they are not simulated as textured objects, as is the case in most of the techniques developed today. In other words, from a single image we know how to reconstruct mirrors as well as isotropic and anisotropic surfaces. This procedure makes it possible, on the one hand, to analyse through the mirror some objects that are not directly seen in the real image and, on the other hand, to address several industrial applications. The first application is Augmented Reality, which is widely used for special effects in cinema and postproduction. Figure 3 presents an example of this application, with a modification of the viewpoint position, while figure 4 shows the same scene containing added synthetic objects under novel illumination conditions. Many other direct applications are possible, especially in high-rate compression of video sequences (with no visible loss). Our technique can also be applied to the automatic positioning of mirrors and light sources, advanced reflectance analysis of surfaces, etc.

In conclusion, we have presented a new image-based rendering technique that is very fast and that recovers the BRDF (for diffuse, specular, isotropic, anisotropic and textured objects) of all surfaces in a scene, including very complex ones. Our method is very efficient and needs very little data: a single image taken with a camera and a 3D geometric model of the scene (including light sources) are sufficient for full reflectance recovery. Some future directions are currently being investigated in order to develop new applications and to handle scenes containing more complex BRDFs and objects that combine anisotropic reflections with texture properties.

Please contact:

Samuel Boivin - INRIA

Tel: +33 1 3963 5186

E-mail: Samuel.Boivin@inria.fr