Immersive Telepresence

by Vali Lalioti, Frank Hasenbrink and Olaf Menkens

The aim of Immersive Telepresence is to enable geographically dispersed groups of people to meet and work within projection-based Virtual Reality systems as if face-to-face.

In our approach, participants not only meet as if face-to-face, but also share the same virtual space and perform common tasks in order to reach a common goal. For this purpose, live stereo-video and audio of remote participants are integrated into the virtual space of another participant, allowing a geographically separated group of people to collaborate while maintaining eye contact, gaze awareness and body language.

Participants can use a wide range of Projective Virtual Reality Systems, such as CyberStage, Responsive Workbench, Cooperative Responsive Workbench and Teleport, resulting in symmetric or asymmetric collaboration scenarios. The scientific approach includes stereo camera calibration to obtain the camera parameters, which are then used to integrate the stereo-video into the virtual space while preserving the stereo effect and perspective for the tracked viewer.
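
To illustrate why the camera parameters matter, the following is a minimal sketch under a standard pinhole camera model. It is not the calibration procedure or integration code used in the project, which the article does not describe. The names `Intrinsics`, `project`, `depth_from_disparity` and `quad_size_at_depth` are hypothetical, and the numeric values in the usage note are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Intrinsics:
    """Pinhole parameters as a typical calibration would report them (pixels)."""
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def project(k, point):
    """Project a camera-space point (x, y, z) with z > 0 to pixel coordinates."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must lie in front of the camera")
    return (k.fx * x / z + k.cx, k.fy * y / z + k.cy)


def depth_from_disparity(k, baseline_m, disparity_px):
    """Depth of a point seen by a rectified stereo pair: Z = fx * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return k.fx * baseline_m / disparity_px


def quad_size_at_depth(k, image_w_px, image_h_px, depth_m):
    """Metric width and height of a quad that shows the whole image
    at true scale when placed depth_m away from the virtual camera."""
    return (image_w_px * depth_m / k.fx, image_h_px * depth_m / k.fy)
```

For example, with assumed intrinsics `Intrinsics(800, 800, 320, 240)` and a 640 x 480 image, `quad_size_at_depth(k, 640, 480, 2.0)` gives a quad of roughly 1.6 m by 1.2 m, which is one plausible way to size the video geometry so that the remote participant appears life-sized.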

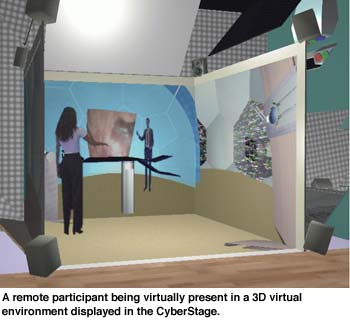

Immersive Telepresence in CyberStage

The first prototype of the environment was demonstrated in October 1997. A remote participant captured with a stereo camera was chroma-keyed into a 3D virtual space and thus appeared virtually present in a 3D virtual environment displayed in the CyberStage. For the first time, a fully immersive 3D virtual teleconference was demonstrated, based on virtual studio techniques of keying video images into computer-generated scenes.
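
The keying step can be sketched in software as follows. The prototype used dedicated chroma-keyer hardware, so this is only an illustration of the principle, not the method actually used. The function name `chroma_key`, the blue key color and the tolerance value are assumptions.

```python
def chroma_key(foreground, background, key=(0, 0, 255), tolerance=60):
    """Composite foreground over background, replacing pixels close to the
    key color with the corresponding background pixel.

    Both images are lists of rows of (r, g, b) tuples with equal dimensions.
    The key color and tolerance are illustrative assumptions.
    """
    tol_sq = tolerance * tolerance
    out = []
    for fg_row, bg_row in zip(foreground, background):
        row = []
        for fg, bg in zip(fg_row, bg_row):
            # Squared Euclidean distance in RGB space to the key color.
            dist_sq = sum((a - b) ** 2 for a, b in zip(fg, key))
            row.append(bg if dist_sq <= tol_sq else fg)
        out.append(row)
    return out
```

A hard threshold like this produces jagged edges around the participant; production keyers typically compute a soft matte instead.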

The imported image sequences had to pass through several Silicon Graphics video options, delay units and chroma keyers before being taken as a dynamic texture into the AVOCADO Software Framework. AVOCADO handled the positioning and display of the remote participant within the virtual world of an operating theater. The remote participant gave instructions to CyberStage visitors on how to operate various devices and instruments of this virtual operating theater.

CyberStage and Immersive Telepresence

To integrate the live audio of the remote participant, the spatial audio functionality of AVOCADO was used (see previous article). The live audio was attached as an audio source to the geometry representing the live stereo-video in the virtual world. As a result, audio from the remote participant was spatialized: it increased in volume or faded away as the listener moved nearer to or further from the video image of the remote participant in CyberStage. This greatly enhances the immersive telepresence effect.
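
The distance-dependent volume described above can be sketched with a clamped inverse-distance law. The article does not state which attenuation model AVOCADO uses, so the formula, the function name `distance_gain` and the parameters `ref_distance` and `rolloff` are assumptions chosen for illustration.

```python
import math


def distance_gain(listener, source, ref_distance=1.0, rolloff=1.0):
    """Gain in (0, 1] for a source heard from the listener position.

    Uses a clamped inverse-distance model (an assumption, not AVOCADO's
    documented behavior): full volume inside ref_distance, then falling
    off as the listener moves away from the source.
    """
    d = max(math.dist(listener, source), ref_distance)
    return ref_distance / (ref_distance + rolloff * (d - ref_distance))
```

With the defaults, a listener 2 m from the video image hears the source at half the reference gain, and at 4 m at a quarter.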

Immersive Telepresence at the Cooperative Responsive Workbench

The Cooperative Responsive Workbench extends the Responsive Workbench with a vertical screen, thus enlarging the viewing frustum and allowing remote collaboration and Immersive Telepresence.
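
For a projection screen and a head-tracked viewer, the viewing frustum is generally asymmetric and depends on the eye position. The sketch below shows the standard off-axis computation for a single planar screen. It is a generic illustration, not code from the Cooperative Responsive Workbench; the function name `off_axis_frustum` and the coordinate convention (screen in the plane z = 0, viewer at z > 0) are assumptions.

```python
def off_axis_frustum(eye, screen, near):
    """Frustum extents at the near plane for a tracked eye and a planar screen.

    eye    -- (x, y, z) eye position, with z > 0 the distance to the screen
    screen -- (left, right, bottom, top) edges of the screen in the plane z = 0
    near   -- near clipping distance

    Returns (l, r, b, t), suitable for a glFrustum-style projection.
    """
    ex, ey, ez = eye
    if ez <= 0:
        raise ValueError("eye must be in front of the screen")
    left, right, bottom, top = screen
    scale = near / ez  # similar triangles: screen plane -> near plane
    return ((left - ex) * scale, (right - ex) * scale,
            (bottom - ey) * scale, (top - ey) * scale)
```

Each additional screen, such as the vertical one, gets its own frustum computed this way from the same tracked eye position, which is how a second screen widens the region the viewer can see.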

On the vertical screen of the Cooperative Responsive Workbench, a user can see and communicate in real time with a person or team located at a different place, and at the same time view and manipulate 3D stereoscopic virtual objects (e.g. the model of a car, seismic data for mining or medical data of a patient).

Immersive telepresence can be used in a variety of application areas, such as medical and geoscience applications (see article in ERCIM News No. 37).

Please contact:

Vali Lalioti - GMD

Tel: +49 2241 14 2787

E-mail: vasiliki.lalioti@gmd.de

Frank Hasenbrink - GMD

Tel: +49 2241 14 2051

E-mail: frank.hasenbrink@gmd.de

Olaf Menkens - GMD

Tel: +49 2241 14 2362

E-mail: olaf.menkens@gmd.de